From Video: Z-Image Base is out! Best local AI image model

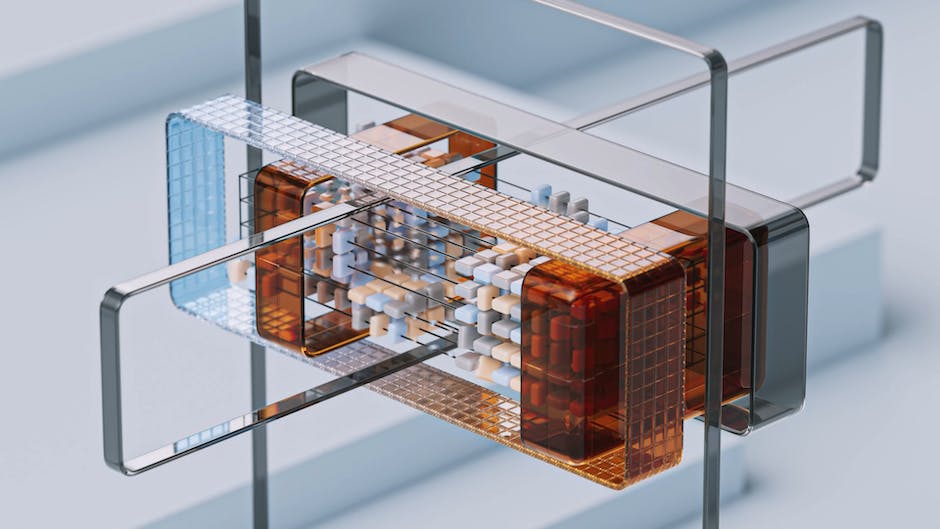

Alibaba's Tongyi Lab has released the full Z-Image model, an open-source image generator that the video presents as a new standard for local AI image generation. Unlike the earlier Turbo release, it is an undistilled transformer model, so it offers more variety and finer artistic control.

The Difference Between Full and Turbo Models

The Z-Image Turbo model focuses on speed: it needs only 8 to 10 steps to generate an image. However, it lacks diversity, because different seeds often produce similar results. The full Z-Image model addresses this by creating distinct characters and layouts each time you change the seed. It also supports negative prompts, which let you tell the model what to leave out of your image.
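Negative prompts are commonly implemented through classifier-free guidance (CFG). At each sampling step the model predicts noise twice, once with the positive prompt and once with the negative prompt (which takes the place of the usual empty, "unconditional" prompt), and the two predictions are combined. The minimal sketch below shows only that combination step on plain Python lists. The function name `cfg_combine` and the scale value are illustrative assumptions, not code from Z-Image or ComfyUI. Few-step distilled models are often run with guidance switched off, which would explain why negative prompts matter mainly on the full model; treat that link as an assumption.

```python
def cfg_combine(cond, neg, scale):
    """Classifier-free guidance with a negative prompt (illustrative sketch).

    cond:  noise prediction conditioned on the positive prompt
    neg:   noise prediction conditioned on the negative prompt,
           used in place of the empty (unconditional) prompt
    scale: guidance scale; at 1.0 the result reduces to cond
    """
    if len(cond) != len(neg):
        raise ValueError("predictions must have the same shape")
    # Start from the negative prediction and push toward the positive one.
    # Larger scales push further away from what the negative prompt describes.
    return [n + scale * (c - n) for c, n in zip(cond, neg)]


# Toy two-element "predictions"; real ones are large latent tensors.
print(cfg_combine([1.0, 2.0], [0.0, 1.0], 2.0))  # [2.0, 3.0]
```

Because the negative prediction replaces the unconditional one, every guided step costs two model evaluations, which is part of why the full model is slower per image than Turbo.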

Z-Image vs Flux

In the video's tests, Z-Image excels where other models such as Flux struggle. It is better at depicting famous people and anime characters, and it renders text with high accuracy. In artistic tests, it captured the styles of Monet and Chinese watercolor more effectively, producing authentic-looking brushstrokes. It also follows complex instructions about human anatomy well.

Running Z-Image Locally

You can run Z-Image on your own computer with ComfyUI. You need three files from Hugging Face: the UNET (diffusion) model, the CLIP text encoder, and the VAE. The full model is about 12GB, so it requires a powerful graphics card. If your graphics card is older or has less memory, you can use the smaller GGUF versions, which range from 4GB to 8GB.
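The file sizes above follow from simple arithmetic: weight size is roughly the parameter count times the bits stored per weight. The sketch below assumes about 6 billion parameters, a figure inferred here from "about 12GB" at 16 bits (2 bytes) per weight rather than taken from the video. The function name `weights_size_gb` is hypothetical.

```python
def weights_size_gb(num_params, bits_per_weight):
    """Approximate size of the raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Assumption: ~6 billion parameters, inferred from ~12GB at 16 bits per weight.
PARAMS = 6e9

for label, bits in [("16-bit (full)", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weights_size_gb(PARAMS, bits):.1f} GB")
```

This gives about 12GB, 6GB, and 3GB. Real GGUF files tend to be somewhat larger than these nominal figures because quantized formats store per-block scale factors and often keep some layers at higher precision. The text encoder and VAE are separate files, and sampling needs extra memory beyond the weights themselves.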

Advanced Tools and LoRA Training

Z-Image is not limited to text prompts. You can use it for image-to-image tasks, such as turning a sketch into a realistic photo, and for inpainting, which regenerates only selected parts of a picture. Many creators also use Z-Image as a base model for training custom styles, and new tools can create a temporary style from just a few images in minutes.
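Both image-to-image and inpainting reuse the same sampler with a few changes. In image-to-image, a "strength" (called `denoise` in ComfyUI) controls how much of the noise schedule is re-run on the input image. In inpainting, a mask decides which regions come from the new generation and which are kept from the original. The minimal sketch below follows the strength convention used by Hugging Face diffusers. ComfyUI's `denoise` plays the same role but builds its schedule differently. The function names are hypothetical, and the blend operates on plain lists rather than real latents.

```python
def img2img_steps(num_steps, strength):
    """Split a sampling schedule for image-to-image (diffusers-style convention).

    strength in [0, 1]: 0 keeps the input image, 1 ignores it entirely.
    Returns (skipped, run): the input image is noised to the level of
    step `skipped`, then only the remaining `run` steps are sampled.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    run = min(int(num_steps * strength), num_steps)
    return num_steps - run, run


def inpaint_blend(original, generated, mask):
    """Keep generated values where mask is 1 and original values where it is 0."""
    return [m * g + (1 - m) * o for o, g, m in zip(original, generated, mask)]


print(img2img_steps(20, 0.25))  # (15, 5)
print(inpaint_blend([0.2, 0.4], [0.9, 0.9], [1, 0]))  # [0.9, 0.4]
```

A low strength, such as 0.25, keeps most of the sketch's composition because only the last few steps are re-sampled. Many inpainting workflows apply the masked blend at every step rather than only at the end, so the edited region stays consistent with its surroundings.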